1. Headline & intro

The idea of robots that can be trusted not to drop the box or mangle the product has been a running joke in automation for years. Generalist’s new GEN‑1 model suggests that joke is about to get old. With reported success rates of around 99% on delicate, repetitive tasks, we’re suddenly much closer to robots that can do economically useful work without armies of engineers scripting every motion. In this piece, we’ll look at what GEN‑1 really changes, why this looks like a GPT‑3 moment for physical work, and what it means for factories, warehouses, and eventually homes.

2. The news in brief

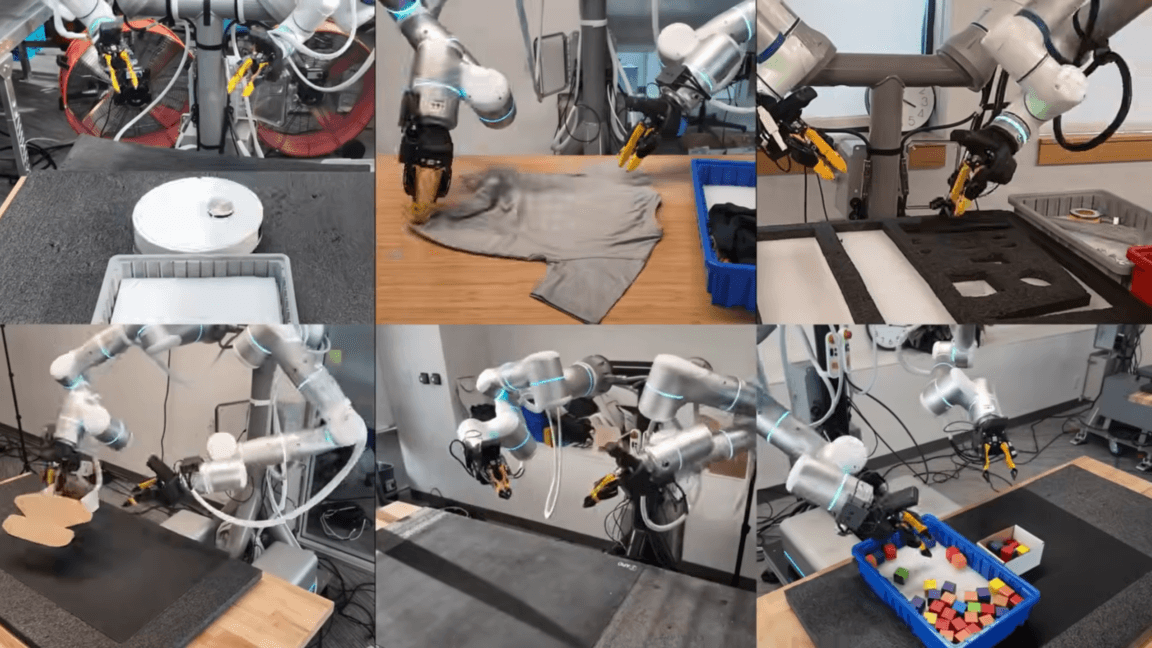

According to Ars Technica, robotics startup Generalist has unveiled GEN‑1, a new “physical AI” system trained on over 500,000 hours of human manipulation data captured with custom “data hands.” The model controls robotic hands and arms and reportedly achieves around 99% reliability on production-style tasks such as folding boxes, packing phones, sorting auto parts, and servicing robot vacuum cleaners.

GEN‑1 builds on the company’s earlier GEN‑0 model but can adapt its pretrained skills to a specific robot with about an hour of additional tuning, while operating roughly three times faster than GEN‑0. Generalist emphasizes that GEN‑1 is not just precise but also adaptive: it can respond to unexpected disturbances, recover from mistakes, and use strategies that were not explicitly demonstrated, such as shaking a bag to settle an object inside. The company argues this crosses a threshold where the system can start to be deployed in real industrial workflows.

3. Why this matters

The headline number—99% reliability—matters less as a marketing stat and more as a signal: we’re finally approaching the reliability threshold where generalist robot manipulation is cheaper than highly customized automation for certain workflows.
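To see why the last percentage point matters, here is a rough sketch of the arithmetic. With a per-cycle success rate p, a station running n cycles expects n(1 - p) failures, and a task chained from k steps succeeds with probability p^k if the steps fail independently. The cycle count, step count, and the independence assumption are illustrative choices, not figures reported by Generalist or Ars Technica.

```python
def expected_failures(success_rate: float, cycles: int) -> float:
    """Expected number of failed cycles, assuming each cycle fails independently."""
    return cycles * (1.0 - success_rate)


def chained_success(step_success: float, steps: int) -> float:
    """Probability that a task made of `steps` independent steps fully succeeds."""
    return step_success ** steps


if __name__ == "__main__":
    cycles_per_shift = 2_000  # hypothetical workload, not a reported figure
    steps_per_task = 5        # hypothetical number of sub-steps per task
    for p in (0.95, 0.99, 0.999):
        failures = expected_failures(p, cycles_per_shift)
        task_ok = chained_success(p, steps_per_task)
        print(f"p={p:.3f}: ~{failures:.0f} failures/shift, "
              f"{steps_per_task}-step task succeeds {task_ok:.1%} of the time")
```

Under these assumed numbers, 99% still means roughly 20 failed cycles per shift, which is why the nature of those failures matters as much as the rate itself.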

Traditional industrial robots excel at rigid, pre‑programmed motions: weld this seam, move this car door along that spline, repeat for ten years. The moment you ask them to handle deformable objects (clothes, cables, plastic bags) or a variety of similar items (different phone models, packaging types), the cost of integrating and maintaining the system explodes. That’s why so many real‑world warehouses still rely on human pickers, and why laundry‑folding robots remain YouTube demos, not products.

GEN‑1 attacks this pain point directly. If a single model can be dropped into different robot bodies, adapt in an hour, and handle a family of related tasks with 99% success, the economics change. Instead of spending months hand‑engineering a bespoke cell for one SKU, integrators could deploy a “foundation model for hands” and specialize it per client.

The winners in the short term are:

- 3PLs and e‑commerce warehouses that juggle constantly changing product catalogs.

- Contract manufacturers who assemble short runs for many customers.

- Robotics integrators who can productize their expertise instead of starting from scratch on each project.

Potential losers:

- Low‑skill manual workers in logistics, light assembly, and rework stations.

- Vendors of narrow, single‑purpose robots, whose main moat has been hard‑earned reliability.

There’s also a subtler shift: if robots can recover from mistakes without explicit programming, engineering moves from “specify every motion” to “specify goals and constraints.” That’s the same leap we saw with large language models—and it tends to favor players who own data and compute, not those who write traditional control code.

4. The bigger picture

GEN‑1 fits into a broader race to bring foundation‑model thinking into the physical world. We’ve already seen several approaches:

- Google’s Gemini Robotics work combines large visual‑language models with motion planners to execute high‑level natural language commands.

- Startups like Physical Intelligence focus on dexterous manipulation in simulated homes, then transfer those skills to real robots.

- Tesla’s Optimus pursues the humanoid form factor first, but, by the company’s own admission, still isn’t doing useful work at scale.

Generalist is taking a more pragmatic route: don’t start with a walking humanoid, start with reliable hands that can do economically valuable tasks at fixed stations. It’s closer in spirit to what companies like Covariant or Intrinsic (Alphabet) have been trying in warehouses and manufacturing cells.

Historically, major shifts in automation have tended to follow a similar arc: from rigid, script‑based systems to probabilistic, data‑driven ones. In software, that was the jump from expert systems to machine learning, and later to generative AI. In robotics, we’ve had decades of impressive demos but brittle deployment. If GEN‑1’s claims hold under independent scrutiny, we may finally be crossing from “impressive demo” to “boring, reliable tool,” and boring is exactly what factories pay for.

The comparison with GPT‑3 is tempting and not entirely hype. GPT‑3 didn’t solve language, but it reached a quality level where thousands of startups could build products on top without being NLP research labs. A manipulation model that’s good enough for many production tasks could have the same platform effect for physical automation.

5. The European / regional angle

For Europe, this kind of technology hits a very sensitive intersection: an ageing workforce, a strong industrial base, and some of the world’s strictest AI regulation.

The EU has one of the highest densities of industrial robots per worker, particularly in Germany, Italy, and the Czech Republic. European manufacturers—especially the Mittelstand—already rely heavily on automation to stay competitive despite higher labor costs. A generalist manipulation model that can be retrofitted onto existing robot arms from KUKA, ABB, or FANUC is exactly the sort of lever many plants are looking for.

But European regulators are watching closely. Under the incoming EU AI Act, AI systems that control physical machinery in workplaces can be classified as “high‑risk,” triggering strict obligations around data governance, robustness, and human oversight. A model like GEN‑1, which learns from massive, potentially cross‑border datasets of human workers using “data hands,” will raise questions:

- Who owns the motion data of workers—employers, platform providers, or workers themselves?

- How are recordings anonymized, especially when captured in real factories or warehouses subject to GDPR?

- How do you certify a learning manipulation system under the CE framework, when its behavior can evolve with updates?

For European robotics startups and integrators, there’s opportunity here. Companies in Berlin, Munich, Zurich, Barcelona, and the Nordics are already building vertical solutions on top of third‑party AI models. GEN‑1‑style systems could become a new layer in the stack—provided they can be audited, documented, and constrained to satisfy EU regulators and works councils.

6. Looking ahead

What happens next is less about flashy demos and more about deployment pragmatics.

In the next 12–24 months, expect pilot projects in:

- E‑commerce fulfillment: bin picking, returns processing, and kitting.

- Electronics assembly and rework: handling fragile, high‑value items.

- Small‑batch packaging lines where SKUs change weekly.

The numbers to watch won’t come from YouTube videos but from overall equipment effectiveness (OEE) and total cost of ownership compared with a human operator. If GEN‑1‑class systems can run two or three shifts with minimal intervention and low downtime, CFOs will take notice.
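OEE is conventionally computed as the product of availability, performance, and quality. A minimal sketch of that calculation follows; the `ShiftLog` structure and the shift figures in the usage note are made up for illustration and do not describe any GEN‑1 deployment.

```python
from dataclasses import dataclass


@dataclass
class ShiftLog:
    """Hypothetical per-shift record for one robot cell."""
    planned_minutes: float      # scheduled production time
    downtime_minutes: float     # stops, jams, operator interventions
    ideal_cycle_seconds: float  # best-case time per unit
    total_units: int            # units attempted
    good_units: int             # units that passed quality checks


def oee(log: ShiftLog) -> float:
    """Overall equipment effectiveness = availability * performance * quality."""
    run_minutes = log.planned_minutes - log.downtime_minutes
    if log.planned_minutes <= 0 or run_minutes <= 0 or log.total_units <= 0:
        return 0.0
    availability = run_minutes / log.planned_minutes
    performance = (log.ideal_cycle_seconds * log.total_units) / (run_minutes * 60)
    quality = log.good_units / log.total_units
    return availability * performance * quality
```

For example, `oee(ShiftLog(480, 48, 10, 2000, 1980))` describes an assumed eight-hour shift with 48 minutes of downtime and a 99% pass rate. Even with that pass rate, the cell's OEE is pulled down by downtime and slower-than-ideal cycles, which is why a success rate alone does not settle the business case.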

Technically, several open questions remain:

- How well does the model generalize to truly novel objects and tools, not just variants of what it has seen?

- What is the failure mode of the remaining 1%—annoying retries or catastrophic damage and safety incidents?

- How frequently do models need re‑training or fine‑tuning as products, packaging, and workflows change?
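The failure-mode question above can be framed as an expected-cost calculation: the same 1% failure rate is cheap if nearly all failures are simple retries and expensive if even a small share damages product. The function below is a sketch under that framing; the two-way split into "retry" and "damage" and every number passed to it are hypothetical placeholders.

```python
def expected_failure_cost_per_cycle(
    failure_rate: float,
    damage_share: float,
    retry_cost: float,
    damage_cost: float,
) -> float:
    """Expected cost of failures per cycle.

    failure_rate: probability a cycle fails (e.g. 0.01 for 99% success)
    damage_share: fraction of failures that damage product; the rest are retries
    retry_cost / damage_cost: cost of one failure of each kind, in any currency
    """
    if not 0.0 <= failure_rate <= 1.0 or not 0.0 <= damage_share <= 1.0:
        raise ValueError("rates must be between 0 and 1")
    retry_share = 1.0 - damage_share
    cost_per_failure = retry_share * retry_cost + damage_share * damage_cost
    return failure_rate * cost_per_failure


if __name__ == "__main__":
    # Hypothetical costs: a retry wastes a few seconds, damage scraps a phone.
    for share in (0.0, 0.01, 0.10):
        cost = expected_failure_cost_per_cycle(0.01, share, 0.05, 200.0)
        print(f"damage share {share:.0%}: expected cost per cycle {cost:.4f}")
```

With these assumed costs, moving from no damaging failures to one in ten raises the expected cost per cycle by several hundred times, even though the headline success rate is unchanged.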

There’s also a looming platform battle. Will we see a few “foundation models for hands” that every integrator licenses, or will large incumbents (think: big robot arm makers, cloud providers, or auto groups) insist on developing their own closed models? The answer will shape how much room is left for startups.

Home robots—the laundry‑folding, dishwasher‑emptying dream—will likely lag industrial use by many years. Homes are far more diverse and cluttered than warehouses, and the economics are brutal: consumers won’t pay factory‑grade prices. But every incremental improvement in manipulation and robustness in factories indirectly makes domestic robots more realistic.

7. The bottom line

GEN‑1 looks less like a sci‑fi leap and more like a quiet but crucial milestone: robot hands that are finally reliable enough for real work in messy, semi‑structured environments. If the 99% claim holds in the wild, factories and warehouses—not living rooms—will be the first to feel the impact, especially in regions like Europe that already lean on automation. The open question is who will own this new layer of physical intelligence: will it be a few global model providers, or will local players carve out regulated, trustworthy alternatives?