1. Headline & intro

Google has just made AI music a default feature, not a niche experiment. Lyria 3, its latest music model, is now built directly into the Gemini app and YouTube tools. That might sound like a fun toy for 30‑second jingles, but it’s something bigger: the moment AI‑generated soundtracks become as casual as generating an image.

In this piece, I’ll look at what exactly Google shipped, who should be excited (and who shouldn’t), how this fits into the broader AI content race, and why regulators and European music industries will be watching very closely.

2. The news in brief

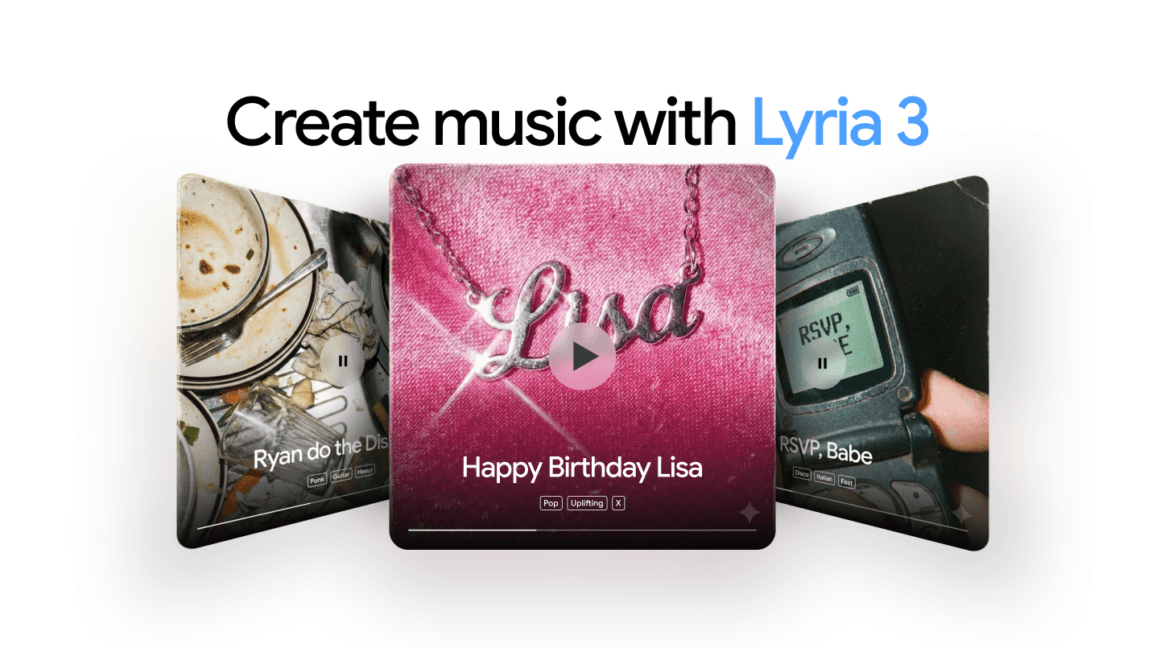

According to Ars Technica, Google is rolling out its new Lyria 3 AI music model inside the Gemini web and mobile apps starting today. Users get a "Create music" option where they can type a description, optionally upload an image as a vibe reference, and receive a roughly 30‑second AI‑generated track moments later.

Unlike earlier Google music models, Lyria 3 can generate both the instrumental and the vocals, including lyrics, even if the user doesn’t provide any text. Each generation is bundled with an AI‑made cover image produced by Google’s Nano Banana image model. Google also offers pre‑made AI tracks inside Gemini that users can remix.

The same technology is being added to Google’s Dream Track toolkit for YouTube Shorts and is meant to pair with the Veo AI video tools. Google embeds its SynthID watermark into Lyria 3 audio so Gemini can later detect whether a clip was created with Google’s AI. The feature launches with support for several major languages, with higher usage limits reserved for paying Gemini AI Pro and Ultra subscribers.

3. Why this matters

Lyria 3 is less about musical artistry and more about friction. Google is removing almost all of it. You no longer need a DAW, a microphone, or even a sense of rhythm. A vague sentence and a few seconds of waiting are enough to generate a short track, lyrics included.

That matters for three groups in particular:

- Casual creators and marketers get instant background music and hooks for Shorts, Reels, TikToks, ads, or pitch decks. For them, “good enough” is often truly good enough.

- Platforms like YouTube and Gemini gain a built‑in content pump. If users can constantly spin up new sounds, they spend more time inside Google’s ecosystem instead of going to specialized tools.

- Human musicians and smaller libraries risk being undercut. When a free, personalized jingle is one tap away, paying for stock audio or hiring a producer becomes harder to justify for many low‑budget projects.

Google’s insistence on SynthID watermarking is strategically smart but only partially reassuring. A watermark you can detect inside Gemini is useful for moderation, recommendation, and future compliance. But it doesn’t magically solve:

- Economic displacement – A flood of AI soundtracks will still compete for attention and budgets.

- Cultural homogenization – Models trained on huge mainstream catalogs tend to smooth out weirdness and local flavor.

- Attribution and compensation – Even if Lyria avoids copying named artists too literally, it still learned from someone’s work.

From a competitive standpoint, this is Google leaning on its core strengths: distribution and integration. Standalone AI‑music startups like Suno or Udio (and earlier, tools like Aiva or Amper) have to convince people to visit their sites. Google simply drops a "Create music" button into an app that hundreds of millions already use. That’s a much more powerful on‑ramp.

4. The bigger picture

Lyria 3 is part of a broader pattern: generative AI is steadily marching across media types. We’ve already normalized AI‑written drafts and AI‑generated images; AI‑assisted video is coming fast. Music was always going to be next.

Google itself has been inching toward this moment for years – from research projects like MusicLM to early Dream Track experiments on YouTube Shorts with a small set of creators and artists. The difference now is scale and default positioning. This is not a research demo; it’s a mainstream product.

Compared to competitors, Google’s angle is less about pure fidelity and more about ecosystem lock‑in:

- Meta has its own music‑generation research but lacks a YouTube‑scale, search‑anchored platform where creators already manage their entire channel.

- Independent AI‑music tools often have impressive sound quality, yet zero distribution, no YouTube‑level audience, and no seamless tie‑in with short‑form video editing.

By pairing Lyria 3 with Veo video tools and YouTube Shorts, Google is quietly assembling a full generative pipeline: text → video → soundtrack → thumbnail, all AI‑generated, all optimized for short‑form feeds. That raises a deeper question: once the cost of creating content approaches zero, what becomes the true bottleneck – attention, trust, or originality?

Historically, every reduction in production cost has reshaped the music industry. Affordable home recording democratized releases; streaming exploded the long tail while concentrating revenue at the very top. AI‑music at Gemini scale is the next step: production that is not just cheaper but automated, and used by people who may not think of themselves as “making music” at all.

5. The European / regional angle

For European users and companies, Lyria 3 lands in the middle of a regulatory and cultural minefield.

The EU AI Act requires transparency about AI‑generated content and, for high‑impact models, more detail about training data. Google’s SynthID watermark and its claims about respecting copyright and partner deals look very much like a pre‑emptive adaptation to this environment. But watermarking alone won’t satisfy questions from European collecting societies about who should be paid when AI music is used commercially.

Then there are the Digital Services Act (DSA) and Digital Markets Act (DMA). YouTube is already classified as a very large online platform under the DSA, with obligations around recommender transparency and systemic risk management. An influx of AI‑generated tracks on Shorts and YouTube proper could force Google to show regulators how it:

- labels AI‑generated music for users,

- prevents spammy AI uploads from gaming recommendations,

- and avoids unfairly privileging its own AI tools over European competitors.

The cultural angle is just as important. Europe cares deeply about local languages, minority cultures, and publicly funded arts. If Lyria 3 mostly reflects Anglo‑American mainstream training data, there’s a risk of further marginalizing smaller scenes – from Slovenian indie bands to Galician folk or Croatian klapa – in favor of endlessly remixable global pop tropes.

On the flip side, European creators also gain access to a free or cheap sketchpad. Songwriters can prototype arrangements, small agencies can score campaigns quickly, and regional startups can integrate Lyria outputs into apps without building their own models.

6. Looking ahead

The current 30‑second limit makes Lyria 3 feel like a “jingle engine,” but that’s unlikely to last. Technically, extending to longer compositions is a question of cost, guardrails, and business model, not feasibility.

Over the next 12–24 months, expect a few developments:

- Deeper YouTube integration. It’s almost inevitable that Shorts and maybe even regular YouTube uploads will get one‑click “Generate soundtrack in Gemini” buttons. That will accelerate AI music adoption far more than any standalone app.

- Rights deals and monetization schemes. To keep labels and publishers on board, Google will probably expand programs similar to its earlier AI music incubators – revenue sharing, opt‑in catalogs, and better controls for artists who don’t want their style mimicked.

- Policy and legal battles. European regulators will push for clearer disclosures on training data, consent mechanisms, and labeling for AI‑generated works. Court cases around music‑model training are almost guaranteed.

- Creator backlash and adaptation. Some musicians will refuse to engage; others will embrace Lyria as a co‑writing tool. The most successful will probably be those who treat AI music like a new instrument rather than a threat or a shortcut.

The biggest unknown is how audiences will react once they realize a non‑trivial share of their feeds’ soundtracks were never touched by a human hand. Will listeners care as long as the vibe fits? Or will “human‑made” become a premium label, similar to “analog” in photography or “vinyl‑only” in DJ culture?

7. The bottom line

Google putting Lyria 3 inside Gemini and YouTube is the tipping point where AI music stops being a curiosity and becomes infrastructure. It will supercharge casual creativity and flood the internet with perfectly serviceable, perfectly generic sound.

Whether this turns into a new golden age of experimentation or a race to the bottom in cultural fast food depends on how platforms, regulators, and – above all – human creators choose to respond. The question for readers is simple: when everything can be music, what will still feel worth listening to?