Headline & intro

Data centers used to be treated as an invisible part of the Internet—out of sight, out of mind, and mostly off the political radar. That era is over. AI, cloud gaming, and video streaming have turned server farms into some of the fastest‑growing loads on national grids, and suddenly voters care whether their electricity bills are subsidising Big Tech.

A new bipartisan push in Washington to force basic transparency on data‑center electricity use looks technical, even boring. It isn’t. It’s the opening move in a fight over who pays for the AI boom, how fast grids must be rebuilt, and whether policymakers are willing to regulate digital infrastructure as tightly as heavy industry.

This piece looks at what the senators are asking for, why it matters far beyond the US, and what it signals for Europe’s own data‑center politics.

The news in brief

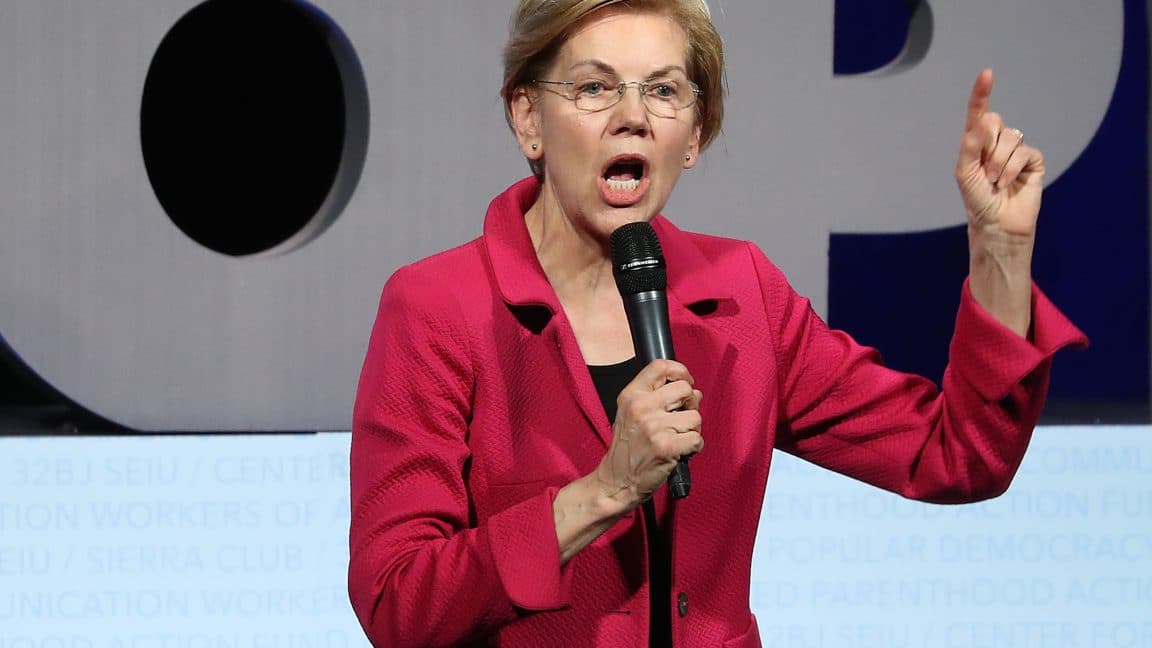

According to a report from WIRED, relayed by Ars Technica, US senators Elizabeth Warren (Democrat) and Josh Hawley (Republican) have sent a joint letter to the US Energy Information Administration (EIA), the federal body that collects and publishes energy statistics.

They urge the EIA to systematically gather and publish annual data‑center energy‑use disclosures, arguing that policymakers and grid operators lack reliable numbers on how much electricity data centers consume and where. The senators point out that other energy‑intensive industries—such as oil and gas or manufacturing—are already required to report detailed data to the EIA.

The letter lands just as the EIA launches a voluntary pilot survey of nearly 200 data‑center operators in Texas, Washington and Virginia, covering electricity consumption, energy sources, cooling and server metrics. Warren and Hawley welcome the pilot, but press the agency on whether reporting will eventually become mandatory and whether off‑grid, “behind‑the‑meter” power will be included.

The move comes amid a flurry of bills in Congress, including a proposed moratorium on new data‑center construction until AI safety rules are in place, and separate proposals to force operators to pay for their own power.

Why this matters

At first glance, asking data centers to report their electricity use sounds like bureaucratic housekeeping. In reality, it strikes at the core business model of hyperscale cloud and AI providers.

Winners:

- Grid planners and regulators gain visibility into a segment that is rapidly reshaping demand curves. Right now, utilities often rely on informal negotiations and on forecasts from data‑center developers that are covered by non‑disclosure agreements. With no independent figures to check those forecasts against, infrastructure ends up over‑ or under‑built.

- Households and small businesses could benefit if better data prevents utilities from gold‑plating the grid based on inflated expectations. Even industry executives have admitted that current data‑center demand forecasts can overshoot by factors of three to five.

- Smaller competitors and independent power producers may find it easier to plan investments and negotiate grid connections when the big cloud players can no longer hide behind confidentiality.

Losers:

- Hyperscalers and some colocation providers lose a key information advantage. Today they can shop multiple utilities, play them against each other with aggressive growth projections, and keep the real utilisation profile opaque.

- Data‑center‑heavy localities that have sold their voters on jobs and tax revenue may face uncomfortable conversations once the true cost in grid upgrades and capacity payments is quantified.

Crucially, this is not only an energy story; it’s a political economy story. Once hard numbers exist, it becomes far easier to impose targeted levies on large consumers, change tariff structures, or condition permits on efficiency standards. Transparency is the precondition for regulation. That’s exactly why industry has resisted it, and why this letter is a meaningful escalation.

The bigger picture

Warren and Hawley’s intervention slots into three larger trends.

1. AI as the new heavy industry.

For two decades, tech companies could claim to be “weightless” compared to steel or cement. The generative‑AI wave has shattered that illusion. Training large models and serving them at scale carry energy and water footprints comparable to those of traditional heavy‑industry clusters. Policymakers are starting to treat data centers more like refineries than office parks.

2. A backlash against speculative demand inflation.

Around the world, utilities are revising demand forecasts upward, often citing AI and cloud growth. Yet many planned data‑center projects never materialise, or ramp far more slowly than initial negotiations suggested. The result: overbuilt networks, stranded grid investments, and public anger when bills rise. Transparent, audited reporting is a direct response to that credibility gap.

3. Convergence of climate and infrastructure policy.

Net‑zero targets depend on electrifying transport, heating and industry while at the same time decarbonising electricity supply. If a huge and poorly measured new demand category, AI data centers, appears in the middle of this transition, it can derail planning. In climate terms, knowing whether data‑center demand is being met with added renewables, repurposed nuclear or new gas plants is not a detail; it is central.

Competitively, this also reshuffles the deck between regions. Countries that can offer abundant low‑carbon power plus regulatory certainty will attract the most sustainable AI build‑out. That’s why Nordic countries with strong grids and cool climates have become data‑center magnets, and why policymakers in Ireland and the Netherlands have already experimented with moratoria or strict caps.

The US is now, belatedly, debating tools—like federal‑level reporting—that the EU has already started to roll out. That’s important for European players watching how global norms on data‑center transparency emerge.

The European / regional angle

For European readers, this US fight over basic energy data may sound familiar—because it is.

The EU has already taken steps in this direction. Under the recast Energy Efficiency Directive (Directive (EU) 2023/1791), data centers in the Union with an installed IT power demand of at least 500 kW must report annual information on energy use, cooling, water consumption and waste heat to an EU‑level database. The Corporate Sustainability Reporting Directive (CSRD) is pushing big tech companies, including US hyperscalers with European operations, to disclose far more on their environmental impact.

Yet implementation is patchy. National regulators in Ireland, Germany or Spain still struggle with the same questions as US utilities: how many projects are real, what’s the genuine load profile, and how do we reconcile local opposition with digital‑economy strategies?

The Warren–Hawley push matters to Europe in three ways:

- Regulatory competition. If the US moves toward EIA‑mandated reporting similar to EU requirements, European lawmakers will feel more confident tightening their own rules. The argument that strict transparency will simply “push data centers to America” becomes weaker.

- Leverage over US hyperscalers. Microsoft, Google, Amazon and others are global players. Once they have to build reporting systems for US operations, extending those systems to EU data becomes cheaper, and politically much harder to resist.

- A template for smaller markets. Countries like Slovenia, Croatia or Portugal, which aspire to attract niche data‑center investments without blowing up local grids, can point to converging US–EU standards as justification for demanding detailed disclosures from developers from day one.

Europe is ahead on paper, but still struggling in practice. A high‑profile US debate could paradoxically give EU regulators the political cover they need to enforce the rules they already have.

Looking ahead

The EIA’s voluntary pilot is clearly just a first step. Expect an intense lobbying battle over what comes next.

Key fault lines to watch:

- Voluntary vs. mandatory. As long as reporting is voluntary, the most aggressive AI builders have an incentive to stay quiet or cherry‑pick what they reveal. Turning surveys into compulsory, audited disclosures would be the real game changer.

- Scope of data. Will the EIA collect only grid‑sourced electricity, or also behind‑the‑meter generation such as on‑site gas turbines and renewables? Without the latter, the picture will be incomplete—and some of the most controversial projects could fall through the cracks.

- Granularity. Industry will push for aggregated, anonymised data; regulators and local communities will argue for location‑specific information to assess local impacts on substations, water resources and air quality.

Politically, bipartisan concern over bills and grid reliability gives this effort momentum. However, outright moratoria on data‑center construction, like the one proposed in the US Senate, are unlikely to become long‑term policy in major economies; the economic and strategic value of AI is simply too high.

A more realistic scenario is conditional expansion: data‑center growth allowed, but tied to strict efficiency benchmarks, firm commitments to finance new low‑carbon capacity, and detailed public reporting. Europe is already edging in that direction; the US now appears to be joining.

For businesses, the message is clear: the era of building giant server farms on the assumption that nobody will ask too many questions about their energy footprint is ending.

The bottom line

The Warren–Hawley letter is less about spreadsheets at the Energy Information Administration and more about power—both electrical and political. Once hard data on data‑center energy use is on the table, arguments over who should pay and how fast we must expand the grid will look very different.

If AI is going to be the next foundational technology, societies will have to decide how much infrastructure they’re willing to build for it, and on whose terms. Better numbers won’t settle that debate—but without them, we’re flying blind.